By Akash Goyal

What are Face Filters?

New technology has been a boon for social networks. News spreads like wildfire, multiple files can be sent in a single click, and modern apps make it easy to play with photos and turn them into something striking. Speaking of photos, we all scroll through social media looking for the latest trending face effects, whether a static mask, face paint, makeup, or an animated effect. Remember the famous Snapchat dog-tongue effect? That’s a face filter in action.

Face filters are augmented reality effects powered by face detection and tracking (often loosely called face recognition). They overlay and blend 3D objects and animations onto the face in real time, so you can capture engaging videos and photos.

Popular Apps & Cool Examples

MSQRD

MSQRD launched at a time when most other applications could only edit still pictures. It let users modify their faces in hilarious ways in real time, then take pictures or record short videos with the effect applied. Facebook acquired MSQRD in 2016, and the app was removed from the Play Store and App Store in 2020.

Muglife

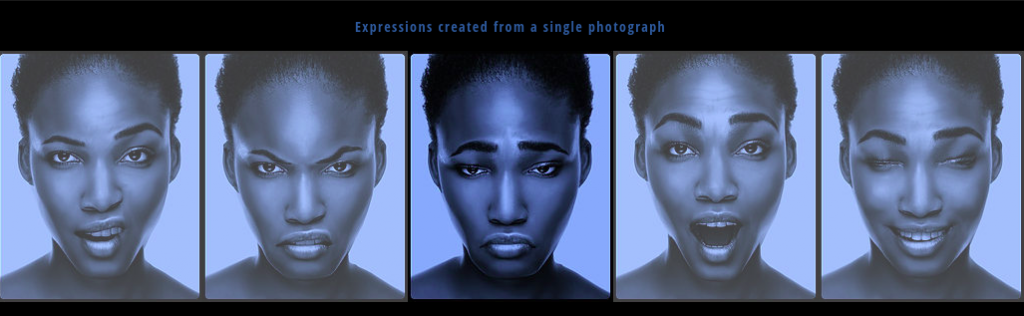

Launched in 2017, Mug Life is one of a kind: obscenely juvenile, but really powerful. It turns simple photos into super-realistic 3D animations, putting users in jaw-dropping moments.

Snapchat

The decade-old multimedia instant messaging app lets you express yourself quickly with millions of lenses. Snapchat’s filters, called Lenses, are very creative and distinctive in this space.

Instagram

A photo and video sharing social networking application owned by Facebook (now Meta), Instagram lets you express yourself in new ways with many Insta filters and the Reels feature.

Technology in use

All these apps use a multitude of technologies: artificial intelligence techniques combined with augmented reality, and computer graphics expertise combined with the latest advances in computer vision. To name a few: landmark detection, segmentation, active shape modeling, and triangulation.

Augmented Reality

Augmented reality (AR) blends the digital and physical worlds. AR users experience the real-world environment with computer-generated perceptual information overlaid on top of it. AR filters are responsive digital effects applied to the user’s face or environment to enhance or modify what is seen in the real world. Brands and social networks are using AR filters more and more often to give users engaging experiences.

Artificial Intelligence

Artificial intelligence (AI) is the simulation of intelligence in machines: the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings.

Segmentation

Segmentation groups similar regions of an image under their respective class labels. A digital image is divided into several subgroups known as “image segments”, which simplifies subsequent processing or analysis by reducing the complexity of the original image. In simple words, segmentation is assigning a label to each pixel.
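To make "assigning a label to each pixel" concrete, here is a minimal sketch that labels pixels by brightness alone. The function name `segment_by_threshold`, the threshold value, and the tiny test image are all assumptions made for illustration; production face filters rely on trained segmentation models rather than a fixed threshold.

```python
import numpy as np

def segment_by_threshold(gray, threshold=128):
    """Label each pixel 1 (foreground) if brighter than threshold, else 0 (background)."""
    gray = np.asarray(gray)
    return (gray > threshold).astype(np.uint8)

# Hypothetical 4x4 grayscale image: a bright 2x2 square on a dark background.
image = np.array([
    [10,  12,  11,  9],
    [10, 200, 210, 12],
    [11, 205, 220, 10],
    [ 9,  10,  12, 11],
], dtype=np.uint8)

labels = segment_by_threshold(image)
print(labels)  # one label per pixel, same height and width as the input
```

The output has the same shape as the input, which is what distinguishes segmentation from classification: every pixel gets its own label instead of the image getting one label as a whole.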

Active Shape Model

Active shape models are statistical models of the shapes of objects that iteratively deform to fit an example of the object in a new image. The shape of an object is represented by landmarks: a chain of consecutive characteristic points, each of which is a distinctive point present in most of the images being considered.
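The statistical part of such a model can be sketched in a few lines. The example below assumes the training shapes are already aligned, learns a mean shape plus its main modes of variation, and then pulls a noisy candidate shape back onto the learned model. It covers only the shape-constraint step; a full active shape model also searches the image around each landmark and repeats both steps until the fit settles. The function names and the three-landmark toy faces are hypothetical.

```python
import numpy as np

def build_shape_model(shapes, n_modes=1):
    """Learn a mean shape and principal modes from (n_samples, n_landmarks, 2) data."""
    X = np.asarray(shapes, dtype=float).reshape(len(shapes), -1)
    mean = X.mean(axis=0)
    # Rows of vt are the directions of greatest landmark variation.
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_modes]

def project_to_model(shape, mean, modes):
    """Constrain a candidate shape to the model: x is approximated by mean + modes^T b."""
    x = np.asarray(shape, dtype=float).ravel()
    b = modes @ (x - mean)              # shape parameters of the candidate
    return (mean + modes.T @ b).reshape(-1, 2)

# Toy training set: left eye, right eye, mouth. Only the mouth height varies.
training = [
    [[0, 0], [2, 0], [1, 1.0]],
    [[0, 0], [2, 0], [1, 1.5]],
    [[0, 0], [2, 0], [1, 2.0]],
]
mean, modes = build_shape_model(training, n_modes=1)

# A noisy candidate: the left eye is slightly off, the mouth is lower than average.
candidate = [[0.1, -0.1], [2, 0], [1, 1.8]]
fitted = project_to_model(candidate, mean, modes)
print(fitted)
```

Because the training faces only ever varied in mouth height, the projection keeps the candidate's mouth position but moves the misplaced eye back to where the model says eyes belong.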

Facial Points Detection

Facial points detection predicts keypoint positions on face images. It can be used as a building block in several applications, such as tracking faces in images and video, analyzing facial expressions, detecting dysmorphic facial signs for medical diagnosis, and biometrics or face recognition.

Detecting facial keypoints is a very challenging problem. Facial features vary greatly from one individual to another, and even for a single individual, there is a large amount of variation due to 3D pose, size, position, viewing angle, and illumination conditions.
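Once keypoints are available, a filter can use them to decide where and how to draw an overlay. The sketch below assumes some detector has already returned the two eye centers as `(x, y)` pixel coordinates and derives a scale, rotation, and anchor point for a sticker. The function `overlay_pose` and the parameter `sticker_eye_distance` (the eye spacing the sticker artwork was drawn for) are hypothetical names used only for illustration.

```python
import math

def overlay_pose(left_eye, right_eye, sticker_eye_distance):
    """Return (scale, angle_degrees, center) for aligning a sticker to two eye keypoints."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    eye_distance = math.hypot(dx, dy)
    # How much to enlarge or shrink the sticker so its eye spacing matches the face.
    scale = eye_distance / sticker_eye_distance
    # Image y grows downward, so a positive angle means the right eye sits lower.
    angle = math.degrees(math.atan2(dy, dx))
    center = ((left_eye[0] + right_eye[0]) / 2, (left_eye[1] + right_eye[1]) / 2)
    return scale, angle, center

# Level face: eyes 60 px apart, sticker drawn for eyes 30 px apart.
scale, angle, center = overlay_pose((100, 120), (160, 120), sticker_eye_distance=30)
print(scale, angle, center)
```

Recomputing these three values on every video frame is what lets an effect follow the head as it moves closer, further away, or tilts.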

Mug Life describes its technology on its official website as follows.

Mug Life technology is broken into three stages:

1). Deconstruction – In the Deconstruction stage, Mug Life uses computer vision technology to analyze and decompose photos into 3D building blocks: camera, lighting, geometry, and surface texture.

2). Animation – In the Animation stage, we use state-of-the-art cinema animation techniques to manipulate the extracted 3D data, while preserving photorealism.

3). Reconstruction – Finally, in the Reconstruction stage, we leverage cutting edge video game technology to re-render your photo as an animated 3D character with stunning quality and detail.

The technology behind the MSQRD app is believed to have played a key role in boosting Facebook’s internal portfolio of AR image and video tools, Spark AR. Similarly, Looksery, which was acquired by Snapchat, is believed to have contributed significantly to Snapchat’s Lenses and its SnapML tool.

Creating a Simple Face Filter

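Creating a lens or filter of your own is easy on these platforms. Using SnapML (for Snapchat) or Spark AR (for Meta), you can plug in a model to apply an art style to the camera feed, use custom segmentation masks, attach images to custom detected objects, understand what is in the scene, and more. The code that follows takes a more basic route: it uses OpenCV’s Haar cascade face detector to place a sticker image over each face found in a photo.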

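```python
## import packages
import os

import cv2

## load haar files
# cv2.data.haarcascades is the cascade folder shipped with the opencv-python pip package (assumed installed)
face_cascade = cv2.CascadeClassifier(cv2.data.haarcascades + 'haarcascade_frontalface_default.xml')
# not used by placeSticker; kept for extending the filter to eye-level effects
eye_cascade = cv2.CascadeClassifier(cv2.data.haarcascades + 'haarcascade_eye.xml')

## method definition
def placeSticker(imgPath, stickerPath):
    img, st = cv2.imread(imgPath), cv2.imread(stickerPath)
    if img is None or st is None:
        raise FileNotFoundError('could not read ' + imgPath + ' or ' + stickerPath)
    orig_st_h, orig_st_w, st_channels = st.shape
    img_h, img_w, img_channels = img.shape
    img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    st_gray = cv2.cvtColor(st, cv2.COLOR_BGR2GRAY)

    # orig_mask is 255 on the sticker's background (pixels brighter than 10, e.g. white)
    # and 0 on the dark sticker artwork
    # use THRESH_BINARY_INV if sticker has transparent background
    ret, orig_mask = cv2.threshold(st_gray, 10, 255, cv2.THRESH_BINARY)

    # find faces in image using classifier (scaleFactor=1.3, minNeighbors=5)
    faces = face_cascade.detectMultiScale(img_gray, 1.3, 5)
    for (x, y, w, h) in faces:
        face_w, face_h = int(w), int(h)
        face_x1, face_y1 = int(x), int(y)
        face_x2 = face_x1 + face_w
        face_y2 = face_y1 + face_h

        # sticker size wrt face, keeping the sticker's aspect ratio
        st_width = int(1.5 * face_w)
        st_height = int(st_width * orig_st_h / orig_st_w)

        # sticker position coordinates: centred on the face, shifted up over the forehead
        st_x1 = face_x2 - int(face_w / 2) - int(st_width / 2)
        st_x2 = st_x1 + st_width
        st_y1 = face_y1 - int(face_h * 0.75)
        st_y2 = st_y1 + st_height

        # out of frame check & update
        st_x1, st_y1 = max(st_x1, 0), max(st_y1, 0)
        st_x2, st_y2 = min(st_x2, img_w), min(st_y2, img_h)
        st_width = st_x2 - st_x1
        st_height = st_y2 - st_y1
        if st_width <= 0 or st_height <= 0:
            continue  # sticker falls entirely outside the frame

        # resize sticker into a new variable so the next face starts from the original;
        # if the box was clipped above, the sticker is squeezed into the visible part
        st_resized = cv2.resize(st, (st_width, st_height), interpolation=cv2.INTER_AREA)
        # nearest-neighbour keeps the mask strictly 0 or 255
        mask = cv2.resize(orig_mask, (st_width, st_height), interpolation=cv2.INTER_NEAREST)
        mask_inv = cv2.bitwise_not(mask)

        # placing sticker into bg: keep the photo where the sticker is background,
        # keep the sticker where it has artwork, then add the two together
        roi = img[st_y1:st_y2, st_x1:st_x2]
        roi_bg = cv2.bitwise_and(roi, roi, mask=mask)
        roi_fg = cv2.bitwise_and(st_resized, st_resized, mask=mask_inv)
        dst = cv2.add(roi_bg, roi_fg)
        img[st_y1:st_y2, st_x1:st_x2] = dst

    # save next to the input, e.g. image1.jpg -> image1_res.png
    cv2.imwrite(os.path.splitext(imgPath)[0] + '_res.png', img)

## test code
if __name__ == '__main__':
    imgPath = 'image1.jpg'
    stickerPath = 'sticker2.png'
    placeSticker(imgPath, stickerPath)
```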
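The listing above decides per pixel whether to show the photo or the sticker, which gives hard edges. A common alternative, sketched here as an assumption and not as part of the original code, is alpha blending: if the sticker PNG carries an alpha channel (for example when read with `cv2.imread(path, cv2.IMREAD_UNCHANGED)`), each output pixel can be a weighted mix of sticker and photo. The helper name `alpha_blend` is hypothetical.

```python
import numpy as np

def alpha_blend(roi, sticker_rgba):
    """Blend a 4-channel sticker onto a same-sized 3-channel region of interest."""
    roi = np.asarray(roi, dtype=np.float32)
    sticker = np.asarray(sticker_rgba, dtype=np.float32)
    alpha = sticker[..., 3:4] / 255.0            # shape (h, w, 1), values in [0, 1]
    blended = alpha * sticker[..., :3] + (1.0 - alpha) * roi
    return np.rint(blended).astype(np.uint8)

# Tiny 1x3 example: opaque, fully transparent, and half-transparent sticker pixels.
roi = np.full((1, 3, 3), 100, dtype=np.uint8)
sticker = np.zeros((1, 3, 4), dtype=np.uint8)
sticker[..., :3] = 200                            # sticker colour
sticker[0, :, 3] = [255, 0, 128]                  # per-pixel alpha
out = alpha_blend(roi, sticker)
print(out[0, :, 0])
```

Opaque pixels take the sticker colour, transparent pixels keep the photo, and partially transparent pixels land in between, which is what produces soft, anti-aliased sticker edges.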

From applying styles to static media to processing a variety of media with real-time effects, face filters have come a long way in a short time. They may evolve in ways nobody has imagined yet. This evolution has unleashed creativity and posed challenges as well: the same effects can be used to make or break somebody’s mood. Open technology should be used responsibly to make the world a better place to live in.

I hope this blog has added well to your understanding of the popular apps & their face filter features. Stay tuned to this space for more technical insights on the topic.
